From research experiment to the platform people depend on

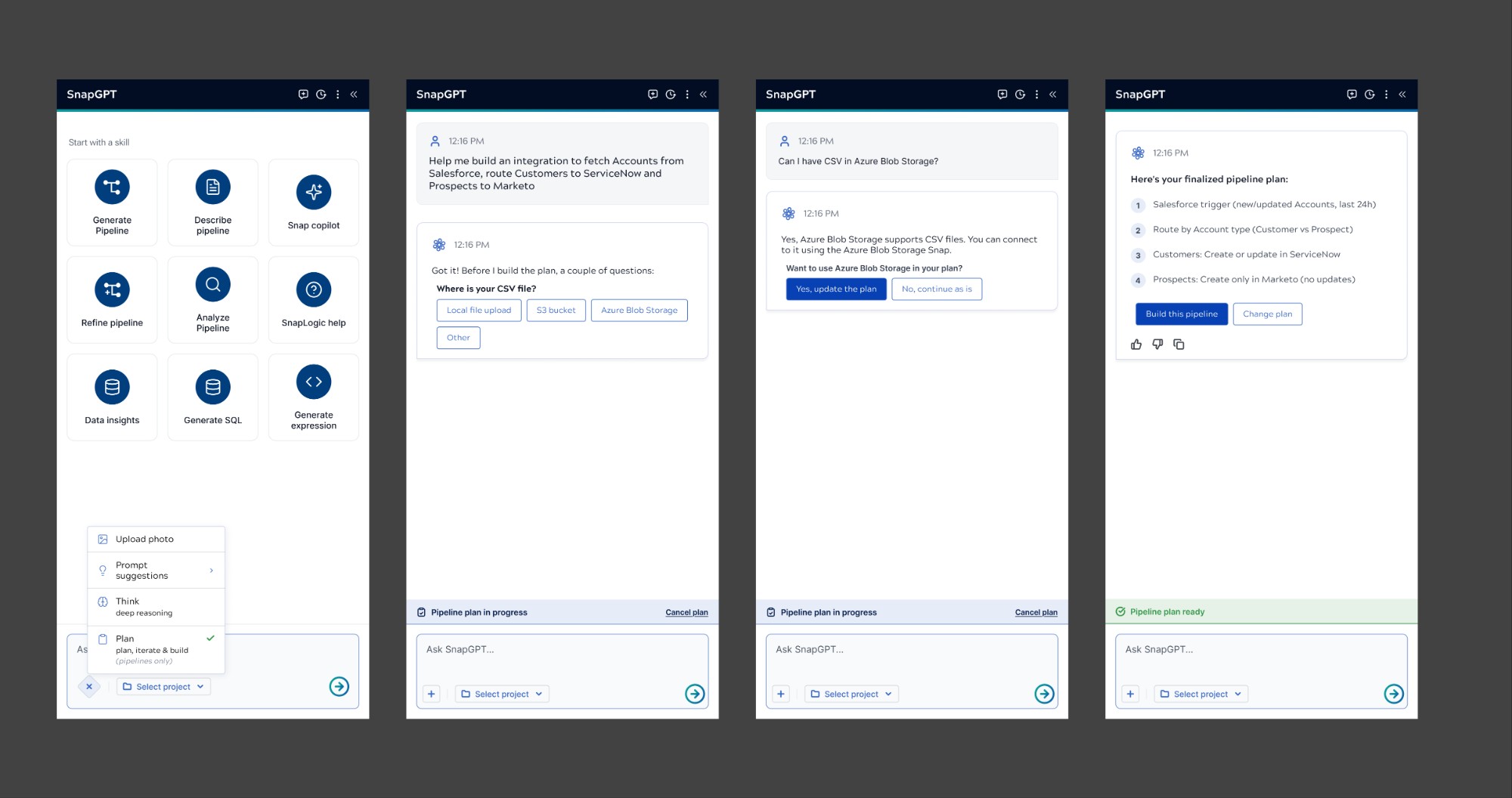

When I inherited SnapGPT, it was a research project. It had been built by the chief data scientist and an offshore development team with no design involvement, no user testing, and no product integration. It lived at the edge of the platform, separate from the core experience, and users didn't know it existed.

Over two years, I redesigned it from the ground up: not just the interface, but the conceptual model. What is this for? Who is it for? What should they be able to do with it that they couldn't do before?

The answer became a complete skill ecosystem: pipeline documentation, pipeline analysis, intelligent pipeline building, research assistance, contextual help, and snap configuration. Each capability was designed for a specific user need and progressively disclosed, so users could discover the system's depth without being overwhelmed by it.

The metric I cared about most wasn't adoption. It was the moment when a user said, "I didn't know it could do that," and came back to try something harder the next day. That's the signal that trust has been established: not passive acceptance, but expanding engagement.

Simultaneously, I expanded the design vision beyond a chatbot, positioning SnapLogic as a governed orchestration fabric for enterprise AI execution. That meant designing for Agent Creator (including Agent Visualizer and Prompt Composer), MCP Servers and Tools, governance surfaces, and audit interfaces that give human administrators meaningful oversight of autonomous AI action. The research experiment became the platform. The platform became the strategy.

SnapGPT · Agent Creator · Agent Visualizer · Prompt Composer · MCP Servers & Agent Tools · Designer Canvas · Admin Manager · Governance Surfaces · Expression Builder · Agent Visualizer & Execution Replay